DeepMind, the company behind AlphaGo, which defeated the world champion at Go, and AlphaFold, which predicted protein structures across many species, has made groundbreaking contributions through its AI research. Here is a look at DeepMind’s exploratory work on general-purpose AI for robots.

A major challenge in robotics is the effort required to train a separate machine learning model for each robot, task, and environment. Google DeepMind, together with 33 other research organizations, recently launched a project to build a general-purpose AI system that addresses this challenge. The system reportedly works with many different physical robots and can perform a wide range of tasks.

Pannag Sanketi, a senior software engineer at Google’s robotics division, stated in an interview, “We have observed that robots excel in specialized domains but lack versatility in general AI. Typically, one needs to train a separate model for each task, each robot, and each environment, starting from scratch to fine-tune every variable.”

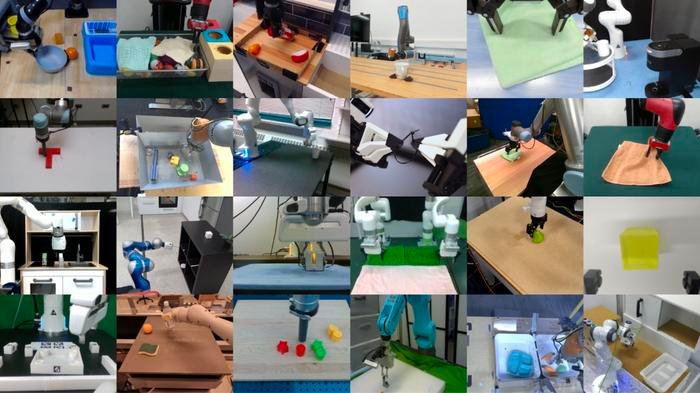

To address this and make robots faster and easier to train and deploy, Google DeepMind has released two key components under a large-scale data-sharing project called Open X-Embodiment: a dataset containing data from 22 different types of robots, and a series of models, including RT-1-X, that can transfer skills across multiple tasks. RT-1-X is a robotics transformer model derived from RT-1, which was designed for real-world robotic control at scale.

In building the Open X-Embodiment dataset, researchers demonstrated more than 500 skills and 150,000 tasks across more than one million episodes (recorded task attempts). It is considered one of the most comprehensive robotics datasets of its kind.

Researchers also tested the models in robotics labs and on a variety of physical robots, and found that the new approach outperforms traditional robot training methods. Different types of robots usually require specialized software models because each has its own sensors and actuators. Open X-Embodiment instead rests on the premise that combining data from different robots and tasks can produce a single general model that drives many types of robots and outperforms the specialized ones. The idea is inspired in part by large language models (LLMs), where training on large, general datasets can yield better performance than training on smaller, narrowly targeted ones.

To create the Open X-Embodiment dataset, the research team collected real-world data from 22 robot embodiments at more than 20 institutions worldwide. The dataset comprises over one million episodes (each episode is the sequence of actions a robot takes when attempting a task), covering over 500 skills and 150,000 tasks.
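As a rough illustration of how such a collection might be organized, the sketch below models each recorded task attempt as a sequence of steps tagged with the robot that produced it, then pools the per-robot lists into one dataset and counts robots, skills, and tasks. The class names, field names, and sample records are hypothetical and chosen only for this example; they are not the project's actual data format.

```python
from dataclasses import dataclass, field


@dataclass
class Step:
    observation: str  # stand-in for a camera image or sensor reading
    action: tuple     # stand-in for a motor command


@dataclass
class Episode:
    robot: str        # which robot embodiment recorded this attempt
    skill: str        # coarse skill label, e.g. "pick" or "open"
    instruction: str  # natural-language description of the task
    steps: list = field(default_factory=list)


def pool(*datasets):
    """Merge per-robot episode lists into one cross-embodiment dataset."""
    return [episode for dataset in datasets for episode in dataset]


def summarize(episodes):
    """Count episodes and the distinct robots, skills, and tasks they cover."""
    return {
        "episodes": len(episodes),
        "robots": len({e.robot for e in episodes}),
        "skills": len({e.skill for e in episodes}),
        "tasks": len({e.instruction for e in episodes}),
    }


# Hypothetical sample data from two made-up robots.
arm_a = [
    Episode("arm_a", "pick", "pick up the apple", [Step("img0", (0.1, 0.0))]),
    Episode("arm_a", "open", "open the drawer"),
]
arm_b = [Episode("arm_b", "pick", "pick up the apple")]

summary = summarize(pool(arm_a, arm_b))
print(summary)
```

Tagging every record with its source robot is what lets one model train on the pooled data while still allowing results to be broken down per robot afterwards.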

The accompanying models are based on the Transformer, a deep learning architecture also used in large language models. RT-1-X is built upon Robotics Transformer 1 (RT-1), a multi-task model designed for scaling robot technology in real-world environments. RT-2-X is built upon RT-2, the successor to RT-1, a vision-language-action (VLA) model that learns from both robot and web data and responds to natural language commands.

Researchers tested RT-1-X in five different research labs on five commonly used robots across a variety of tasks. Compared with the specialized models developed for each of these robots, RT-1-X achieved a success rate that was on average 50% higher on tasks such as object grasping, object manipulation, and door opening. The model can also transfer skills across different environments, something that specialized models trained on specific visual scenes cannot do. These results suggest that models trained on diverse datasets outperform specialized models on most tasks. The paper also notes that the approach applies to many types of robots, from robotic arms to quadrupeds.
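A figure like “50% higher” can be read either as a relative improvement or as a gain in percentage points, and the article does not say which is meant. The sketch below shows how the two readings differ, using invented trial outcomes; the numbers are hypothetical and are not results from the paper.

```python
def success_rate(outcomes):
    """Fraction of trials that succeeded (outcomes is a list of booleans)."""
    return sum(outcomes) / len(outcomes)


def relative_improvement(baseline, candidate):
    """Improvement of candidate over baseline, as a fraction of the baseline."""
    return (candidate - baseline) / baseline


# Hypothetical outcomes over 20 trials each (not data from the paper).
specialized_trials = [True] * 10 + [False] * 10  # 10 of 20 succeed
general_trials = [True] * 15 + [False] * 5       # 15 of 20 succeed

base = success_rate(specialized_trials)
cand = success_rate(general_trials)

rel = relative_improvement(base, cand)  # relative gain over the baseline
point_gain = cand - base                # absolute gain in success rate

print(f"baseline {base:.0%}, general {cand:.0%}")
print(f"relative improvement {rel:.0%}, absolute gain {point_gain * 100:.0f} points")
```

With these made-up numbers, the same pair of results is a 50% relative improvement but only a 25-point absolute gain, which is why it helps to know which measure a reported figure uses.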

Sergey Levine, Associate Professor at the University of California, Berkeley, and co-author of the paper, stated, “For anyone who has ever worked with robots, they can appreciate how incredible this is: models ‘never’ succeed on their first attempt, but this model did.”